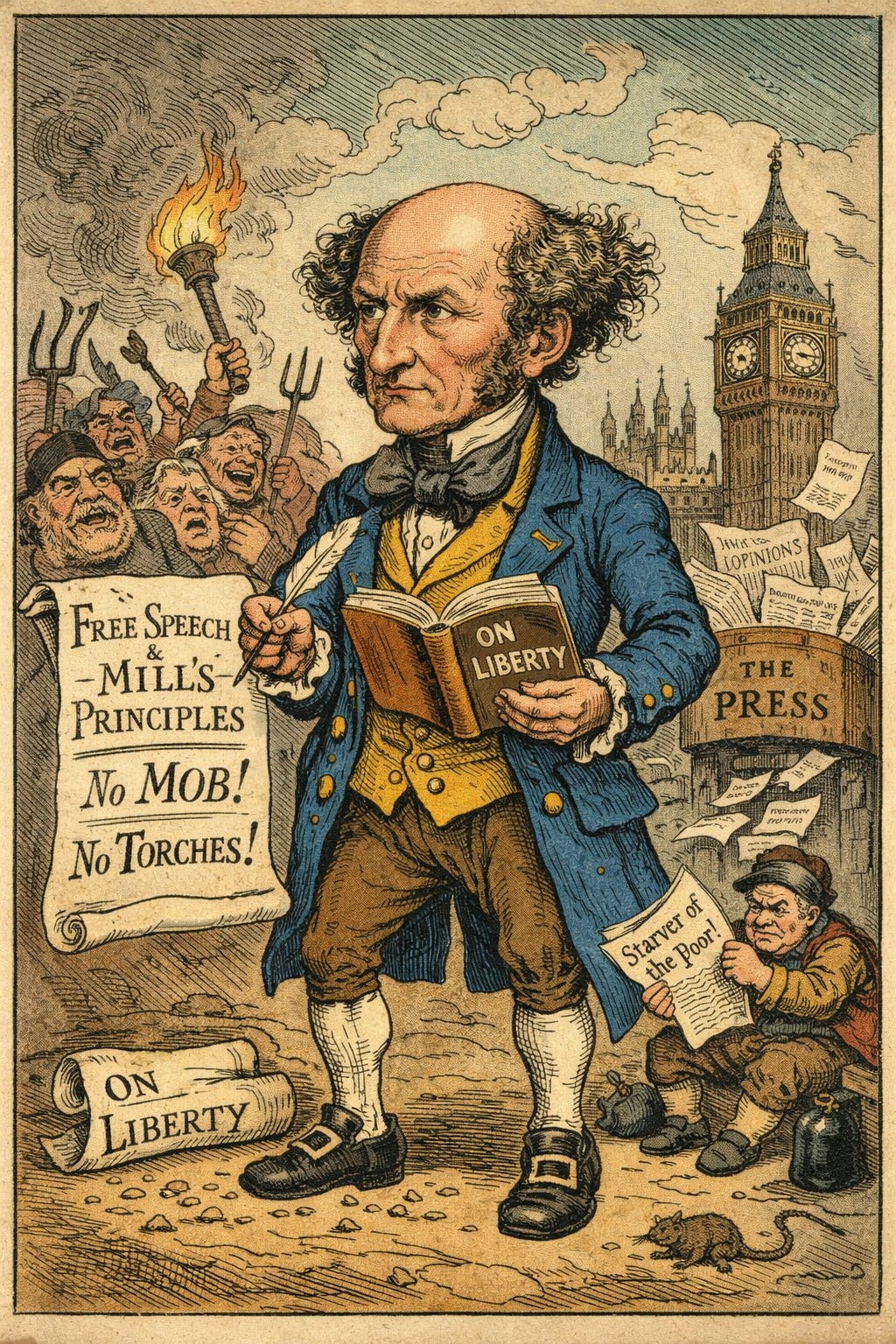

Make Britain Liberal Again

the home of free speech needs to recover its principles

18 months for two tweets

A British man has been sent to jail for 18 months because of two tweets that were said to be inciting racial hatred. The prosecution argued that his tweets had the “potential to trigger discord.”

What Luke Yarwood said was awful. No doubt about that. Calling for migrant hotels to be burned down and people to be slaughtered in the street is abhorrent.

But Yarwood should not have got a prison sentence.

Some people compare tweets like this to inciting a mob. J.S. Mill himself makes an exception to free speech for that case in On Liberty:

An opinion that corn-dealers are starvers of the poor, or that private property is robbery, ought to be unmolested when simply circulated through the press, but may justly incur punishment when delivered orally to an excited mob assembled before the house of a corn-dealer, or when handed about among the same mob in the form of a placard.

People who read tweets are not assembled together, not physically present outside migrant hotels, and are not being addressed as a unit. Twitter is akin to the press here, not an oral declamation outside the hotel. There was no mob in Bournemouth when Yarwood tweeted. No crowd was gathered at the hotels. (The events that prompted some of his tweets were in Germany.)

In the debate about this story, people defending the prosecution have reshared an image of the original tweet. Clearly they feel it is safe for them to publish those words. But if you think the tweet is an incitement akin to standing in front of the mob, you should surely object to this wider sharing. Yarwood's original tweets were seen by only 33 people.

One's intent in sharing the image makes no difference if the wrong person reads the “inciting” words. And if the incident that prompted the words can be in a different country from the tweeter, then the test for potential harm is very wide indeed.

There are ongoing tensions in Britain about migrants. How clear is it that the tweet is safe to share now but was not safe then? Unless the threat is credible and proximate, Mill's conditions are not met. Potential harm is too wide a criterion.

This sort of injustice is becoming normal in the UK.

When it comes to free speech, Britain is living through a sustained institutional failure. The number of people being arrested for speech offences is astonishing. Earlier this year, The Times reported:

Custody data obtained by The Times shows that officers are making about 12,000 arrests a year under section 127 of the Communications Act 2003 and section 1 of the Malicious Communications Act 1988.

The acts make it illegal to cause distress by sending “grossly offensive” messages or sharing content of an “indecent, obscene or menacing character” on an electronic communications network.

Officers from 37 police forces made 12,183 arrests in 2023, the equivalent of about 33 per day. This marks an almost 58 per cent rise in arrests since before the pandemic. In 2019, forces logged 7,734 detentions.

Policy Exchange estimates that the police spend more than 60,000 hours per year dealing with “non-crime hate incidents”.

There are plenty more stories of how the UK is slipping into this illiberalism.

In January, six officers turned up to arrest a married couple because they had complained about their children's school on WhatsApp. The constabulary later had to pay £20,000 in damages.

More recently, eleven police officers are reported to have entered Elizabeth Kinney’s home while she was in the bath and arrested her. You might expect that such dramatic action would be in response to a serious crime of some sort.

But no.

Kinney had used the word “fa**ot” in a text message. Her crime was sending an “offensive, indecent, obscene or menacing” message.

For this, she was given a twelve-month community order: 72 hours of unpaid work and 10 rehabilitation activity days. She was also ordered to pay £364 in costs. This is an extraordinary way for the law to treat a single mother over a text message that a feuding friend reportedly passed to the police out of spite.

And the laws are creeping into more mundane, everyday areas too.

Other speech restrictions are coming into force in the UK. Stories regularly emerge about the effects of the new Online Safety Act, which requires internet users to verify their age before they can read something like Ed West's history Substack. Online fiction that contains sex or violence also requires age verification. So do columns from The Free Press. I recently had to have my picture taken to be allowed to read my own direct messages in the Substack app.

If Substack doesn't comply with these rules, it can be fined up to 10% of its qualifying worldwide revenue, even though it is not a British company.[1]

What happened to Luke Yarwood is part of a broader pattern of illiberalism.

Britain didn’t plan any of this.

It got here slowly, decision by decision. Legislation that sounds sensible at the time is later put to illiberal purposes. Each case finds people willing to justify the sentence: calling for migrants to be slaughtered in the streets is a line many people don't think should be crossed.

But the total effect will gradually become overwhelming. Each exception normalises injustice a little more, and erodes public trust in the law. Thousands are arrested for speech offences, yet in the year to June 2024, 1.9 million violent crimes were closed without being solved. That is 89% of offences. So while hundreds of thousands of violent criminals walk free, more than thirty people a day are arrested for speech offences.

And illiberal laws creep into ordinary life in sinister ways. The man jailed for 18 months and the single mother who sent offensive texts were both reported to the police by someone with whom they had a personal feud: in one case a brother-in-law, in the other a friend.

This is what illiberal laws do. They pit friends and relatives against each other. The police won’t retrieve your stolen phone. They won’t solve millions of violent crimes. But they will send several officers to your house because your friend dobbed you in for using hate speech in a private message.

One common defence of Yarwood’s imprisonment is that he called for the killing of MPs, for the country to be taken over by force. It was once a principle of English law that words alone could be no treason. To be guilty, there had to be evidence of plotting and conspiracy—not mere words thrown out in heat. If we think—truly think—that everyone who declaims that MPs are useless and ought to be hanged should be put in jail, then there will be a lot of awkward moments at family Christmases this year.

Make Britain Liberal Again

Britain was once a bastion of free speech. It was a country able to tolerate nasty words that didn't lead to nasty actions. Now that this is slipping away, we must be careful about what we support. It is easy to ask, “Do you think it's OK to say that?” or “Do you think it is OK to incite violence?” It is easy to say that someone else should be locked up.

But if Britain keeps going like this, it will not like the future it makes. More and more people will use these laws to get revenge on friends and relatives. More and more people who have worse impulse control than others will suffer extreme consequences for petty acts. More and more people will think twice before they say something.

Illiberalism is starting to creep across England like a frost. Let’s hope we do not have to wait too long for the thaw, and that we don’t create too much harm in the meantime.

[1] What qualifies as revenue is discussed here: https://www.pinsentmasons.com/out-law/news/online-safety-act-fees-penalties-revenue

Great post, I really enjoyed it! It's also worth pointing out that having these laws on the books does not guarantee that they will always be used for good reasons, or that they won't be abused.

That being said, I wonder what you think about a different way to treat the Yarwood case. It strikes me that potential harm is too broad a category for punishing speech, but the practical stakes of the speech being acted upon seem relevant. With “we should go flick their ear”, there's a potential harm, but the stakes if people act on it are not as high as with “we should slaughter people”, where even a low risk of death may nevertheless merit some response or consequence. Whether that's jailable, I'm unsure.

My own take is that platforms should respond to (non-inciting) hate speech and misinformation by amplifying counterspeech. For inciting rhetoric like Yarwood's, it strikes me that platforms should demote or even remove such speech, more aggressively the more likely it is to cause harm. But that's a separate question from whether it should be criminalized, I suppose.

I talk about this more here if you’re curious:

https://kylevo.substack.com/p/beyond-the-gates-making-speech-free

This isn’t just illiberalism.

It’s what happens when institutions shift from punishing actions to pre-empting potential narratives under uncertainty.

Once speech is treated as upstream risk, enforcement inevitably expands because “possible harm” has no natural stopping point.